Cryptography

Overview

Cryptography (from the Ancient Greek κρυπτός, meaning "hidden," and γράφω, meaning "to write" — literally "secret writing") is a branch of mathematics that studies methods of protecting information through mathematical transformations. The primary goals addressed by cryptographic methods are ensuring the confidentiality, integrity, authenticity, and availability of data. Cryptography emerged alongside the development of writing and has since become an indispensable part of modern information networks. The most recent and promising directions in the field are quantum cryptography and post-quantum cryptography.

Background

Cryptography originated in antiquity as a means of keeping military, diplomatic, and personal correspondence secret. By the Middle Ages, the first scholarly works devoted to the use and breaking of ciphers had already appeared. Among them were the work of Abu Bakr Ahmad ibn Ali ibn Wahshiyya al-Nabati (9th century), one of the earliest books on cryptography, which describes several ciphers, including polyalphabetic ones, and Al-Kindi's A Manuscript on Deciphering Cryptographic Messages, which contains the first known discussion of frequency analysis. The first European book to describe the use of cryptography is generally considered to be Roger Bacon's 13th-century work The Epistle of Roger Bacon on the Secret Works of Art and Nature and on the Nullity of Magic. The field was further developed by scholars such as Leon Battista Alberti, Johannes Trithemius, and Francis Bacon, though at that time cryptography remained essentially a secret art accessible to only a few.

In the 19th century, the foundational principles of scientific cryptography — still in use today — were established in the works of F. Kasiski and A. Kerckhoffs. Public interest in cryptography was also sparked by popular fiction, including Edgar Allan Poe's The Gold-Bug and Arthur Conan Doyle's The Adventure of the Dancing Men.

By the turn of the 20th century, all the world's major powers had developed their own encryption methods as well as "black chambers" — units within diplomatic or military agencies dedicated to the interception and cryptanalysis of foreign correspondence — which played a decisive role during World War I.

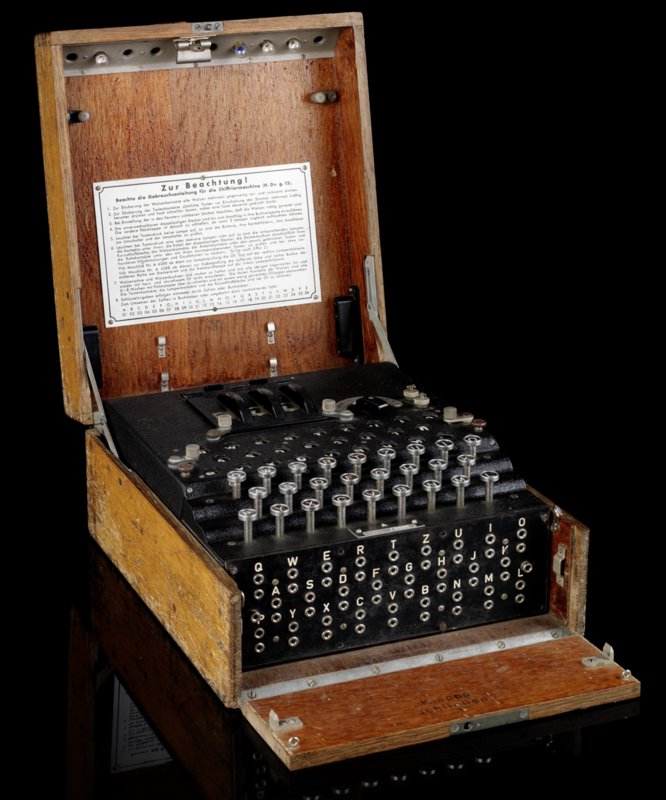

By the outbreak of World War II, the future belligerents had at their disposal both a wide array of field ciphers and the latest secure communications devices — disk and rotor cipher machines.

Through the work of Alan Turing and many other specialists during World War II, the first automated cryptanalysis methods were developed — methods that ultimately led to the breaking of the Enigma cipher machine used by Nazi Germany.

Cryptography emerged as an independent scientific discipline in the mid-20th century with the publication of works by V.A. Kotelnikov and Claude Shannon, who established the information-theoretic foundations of cipher security.

The Enigma cipher machine

A new wave of cryptographic development came in the 1970s, driven by the rapid expansion of open communication networks. It produced the first cryptographic standards (DES, 1976) and an entirely new field, public-key cryptography, based on the computational complexity model of security (W. Diffie, M. Hellman, R. Merkle, 1976; R. Rivest, A. Shamir, L. Adleman, 1977), which dramatically broadened the scope of cryptographic applications.
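To make the idea of public-key key agreement concrete, here is a minimal Python sketch of the Diffie–Hellman exchange. It is a toy under stated assumptions: the modulus 2**31 - 1 and generator 7 are illustrative choices, far too small for real use (practical deployments rely on standardized groups of 2048 bits or more, or on elliptic curves), and the helper names `keypair` and `shared_secret` are hypothetical.

```python
"""Toy Diffie-Hellman key agreement (illustration only, NOT secure)."""
import secrets

# Illustrative toy parameters, not a standardized group.
P = 2_147_483_647  # 2**31 - 1, a Mersenne prime
G = 7              # 7 is a primitive root modulo 2**31 - 1


def keypair() -> tuple[int, int]:
    """Hypothetical helper: return (private exponent, public value G**private mod P)."""
    private = secrets.randbelow(P - 2) + 1  # uniformly random in 1..P-2
    public = pow(G, private, P)
    return private, public


def shared_secret(my_private: int, their_public: int) -> int:
    """Hypothetical helper: raise the peer's public value to our private exponent."""
    return pow(their_public, my_private, P)


alice_priv, alice_pub = keypair()
bob_priv, bob_pub = keypair()

# Only alice_pub and bob_pub cross the network; both sides
# compute G**(alice_priv * bob_priv) mod P.
k_alice = shared_secret(alice_priv, bob_pub)
k_bob = shared_secret(bob_priv, alice_pub)
print(k_alice == k_bob)  # True
```

An eavesdropper sees only the public values; to obtain the shared secret, they would have to recover a private exponent from a public value, which is the discrete logarithm problem.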

Today, cryptographic methods are used everywhere — often without the user even noticing. For instance, in order for you to read these lines, your browser and our server must jointly perform a complex procedure of authentication, key agreement, and decryption, known as the HTTPS protocol.
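As a rough illustration of that procedure from the client side, the sketch below uses Python's standard `ssl` module, which handles the TLS layer that HTTPS runs on. `ssl.create_default_context()` enables certificate and hostname verification; the helper names `make_client_context` and `handshake_info` and the TLS 1.2 floor are illustrative choices. Running `handshake_info` requires network access to a real HTTPS host.

```python
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """Hypothetical helper: a TLS client context that authenticates the server."""
    ctx = ssl.create_default_context()            # loads the system CA certificates
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse legacy protocol versions
    return ctx


def handshake_info(host: str, port: int = 443) -> tuple[str, str]:
    """Hypothetical helper: connect, complete the TLS handshake, report the result."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        # wrap_socket runs the handshake: the server proves its identity with a
        # certificate, both sides agree on session keys, and all later traffic
        # on `tls` is encrypted and decrypted with those keys.
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version(), tls.cipher()[0]
```

For example, `handshake_info("example.com")` returns the negotiated protocol version and cipher suite name. The exact values depend on the server and the local OpenSSL build.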

The most widely used algorithms in modern cryptography fall into the following classes:

- symmetric cryptography, in which the encryption and decryption keys are identical: block and stream ciphers (the most widely used solutions for ensuring the confidentiality and integrity of data at rest and in transit);

- asymmetric cryptography, in which the encryption and decryption keys differ but are related by a computationally hard mathematical relationship: public-key encryption and key agreement (typically used to establish a symmetric encryption key), and digital signatures (the cryptographic equivalent of a handwritten signature, enabling verification of document authorship);

- hash functions, used to map an arbitrary-length input to a fixed-length output, enabling data integrity verification and improving the efficiency of digital signature algorithms.
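The fixed-length output and integrity check described for hash functions can be seen with SHA-256 from Python's standard `hashlib` module. This is a minimal sketch. `is_intact` is a hypothetical helper, and it assumes the expected digest reaches the verifier over a trusted channel: an attacker who can alter both the data and its digest defeats a bare hash, which is the case that keyed constructions such as HMAC, or digital signatures, address.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as 64 hexadecimal characters."""
    return hashlib.sha256(data).hexdigest()


# Arbitrary-length input, fixed-length output: 2 bytes and 1 MB both give 32-byte digests.
print(len(sha256_hex(b"hi")), len(sha256_hex(b"x" * 1_000_000)))  # 64 64


def is_intact(received: bytes, expected_hex: str) -> bool:
    """Hypothetical helper: does the received data match a trusted digest?"""
    # compare_digest runs in constant time, so response timing does not
    # reveal how many leading characters matched.
    return hmac.compare_digest(sha256_hex(received), expected_hex)


document = b"Transfer 100 EUR to account 42"
trusted_digest = sha256_hex(document)  # assumed to arrive over a trusted channel

print(is_intact(document, trusted_digest))                           # True
print(is_intact(b"Transfer 900 EUR to account 42", trusted_digest))  # False
```

The same fixed-length property explains the efficiency gain for digital signatures. A signature scheme can sign the short digest instead of the full document.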

These foundational algorithms form a set of cryptographic primitives from which more complex cryptographic protocols are built, for example protocols for comprehensive data protection in transit (TLS, IPsec), distributed ledger systems, remote access, and many other applications.

A fundamental characteristic of the computational complexity paradigm of cryptographic security is that recovering the private key of an asymmetric cryptographic scheme is theoretically always possible — but in practice would require a prohibitive amount of computational resources.
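The following toy Python sketch shows why key recovery is possible in principle but impractical at scale. It recovers a private exponent from a Diffie–Hellman-style public value by trying every exponent in turn. The prime 65537 and generator 3 are illustrative choices, and `brute_force_dlog` is a hypothetical helper.

```python
def brute_force_dlog(g: int, h: int, p: int) -> int:
    """Hypothetical helper: find x with pow(g, x, p) == h by exhaustive search.

    Always succeeds when such an x exists, but may need up to p - 1 steps.
    """
    value = 1  # g**0 mod p
    for x in range(p - 1):
        if value == h:
            return x
        value = value * g % p  # advance from g**x to g**(x + 1)
    raise ValueError("h is not a power of g modulo p")


P, G = 65537, 3            # toy prime and generator (3 is a primitive root mod 65537)
secret_exponent = 40_000   # the "private key" an attacker wants to recover
public_value = pow(G, secret_exponent, P)

recovered = brute_force_dlog(G, public_value, P)
print(recovered)  # 40000
```

The search takes up to p - 1 steps, so its cost grows with the size of the group. For the 2048-bit groups used in practice, exhaustive search is hopeless, and even the best known classical algorithms remain far beyond available computing resources.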

Advances in computing have led to the emergence of quantum computers. Their computational power is growing rapidly, and for certain problems, including the integer factorization and discrete logarithm problems on which the most widely deployed public-key cryptosystems rely, quantum algorithms offer a dramatic speedup over the best known classical methods. According to projections from the research community, adversaries may be able to use quantum computers to break these cryptosystems by around 2028–2030, which makes the quantum threat increasingly real. In response, quantum cryptography and post-quantum cryptography have been developed as countermeasures.

State-of-the-art and future-proof cryptography for your business:

The time to begin piloting post-quantum cryptography solutions for protecting long-lifecycle data is now. This will allow you to prepare your IT infrastructure for a seamless, large-scale migration to post-quantum cryptography ahead of the formal adoption of standards by national regulators.