Quantum Computers

Overview

Quantum computers are a new generation of computing devices that exploit the effects of quantum mechanics. By executing specialized quantum algorithms, such devices can significantly outperform classical semiconductor-based computers on certain classes of problems. In particular, an adversary executing Shor's quantum algorithm on a sufficiently capable quantum computer would be able to efficiently break the most widely used public-key cryptosystems currently in deployment.

Background

Modern technologies, computing technologies in particular, are approaching the limits of miniaturization imposed by the size of atoms. Classical models cannot adequately describe processes at the atomic scale, and this inadequacy gave rise to quantum physics, a fundamentally new understanding of the laws of nature. Advances in quantum physics throughout the 20th century in turn made it possible to develop the concept of quantum computing, first proposed in the early 1980s by Yu. Manin, R. Feynman, and P. Benioff.

Unlike a classical computer, which operates on bits, each of which takes exactly one of two values (0 or 1), a quantum computer operates on qubits: elementary two-level quantum systems. A qubit has two basis states and can exist in a superposition, that is, a linear combination of those basis states with complex coefficients (amplitudes). The squared magnitudes of the amplitudes sum to 1 and give the probabilities of observing each basis state when the qubit is measured.
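As an illustration of the qubit description above, the following minimal sketch stores a single-qubit state as two complex amplitudes and derives the measurement probabilities from them. The function names `normalize` and `measurement_probabilities` are hypothetical and chosen only for this example.

```python
import math


def normalize(alpha, beta):
    """Scale two complex amplitudes so that |alpha|^2 + |beta|^2 == 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return alpha / norm, beta / norm


def measurement_probabilities(alpha, beta):
    """Probabilities of reading 0 and 1 when the qubit is measured."""
    return abs(alpha) ** 2, abs(beta) ** 2


# An equal superposition with a relative phase: (|0> + i|1>) / sqrt(2).
alpha, beta = normalize(1, 1j)
p0, p1 = measurement_probabilities(alpha, beta)
print(f"P(0) = {p0:.3f}, P(1) = {p1:.3f}")
```

Both outcomes are equally likely here. The relative phase `1j` does not change the probabilities, but it does affect how the state evolves under later operations.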

A simplified picture of quantum computation works as follows. An initial state is encoded into a system of qubits. As the quantum program executes, the state of the system is transformed through a sequence of unitary operations, each performing a specific logical operation. Finally, the state of the system is measured, and the measurement outcome constitutes the output of the quantum program.
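The three stages just described (prepare, transform, measure) can be sketched with a tiny state-vector simulation in plain Python. This is an illustrative toy under stated assumptions, not how real hardware or any particular library works: the helper names are hypothetical, qubit 0 is taken to be the least significant bit of the basis-state index, and the example circuit (a Hadamard gate followed by a CNOT) was chosen only for brevity.

```python
import math
import random

H = 1 / math.sqrt(2)
HADAMARD = [[H, H], [H, -H]]  # a 2x2 unitary matrix


def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 unitary `gate` to qubit `target` of a state vector."""
    new_state = [0j] * len(state)
    mask = 1 << target
    for index, amplitude in enumerate(state):
        if amplitude == 0:
            continue
        bit = (index >> target) & 1  # current value of the target qubit
        for out_bit in (0, 1):
            out_index = (index & ~mask) | (out_bit << target)
            new_state[out_index] += gate[out_bit][bit] * amplitude
    return new_state


def apply_cnot(state, control, target):
    """Flip `target` in every basis state where `control` is 1."""
    new_state = [0j] * len(state)
    for index, amplitude in enumerate(state):
        if (index >> control) & 1:
            index ^= 1 << target
        new_state[index] = amplitude
    return new_state


def measure(state, rng):
    """Sample one basis-state index with probability |amplitude|^2."""
    weights = [abs(a) ** 2 for a in state]
    return rng.choices(range(len(state)), weights=weights)[0]


# 1. Prepare: two qubits in the basis state |00>.
state = [1 + 0j, 0j, 0j, 0j]
# 2. Transform: Hadamard on qubit 0, then CNOT with qubit 0 as control.
state = apply_single_qubit_gate(state, HADAMARD, target=0)
state = apply_cnot(state, control=0, target=1)
# 3. Measure: the outcome is either 00 or 11, never 01 or 10.
outcome = measure(state, random.Random())
print(format(outcome, "02b"))
```

The final state is an entangled pair, so the two measured bits always agree even though each one individually is random.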

Using elementary quantum operations, it is possible to simulate the behavior of the classical logic gates from which conventional computers are built. As a result, a quantum computer is in principle capable of solving any problem that a classical computer can — including problems in cryptanalysis.
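One standard way to see this is the Toffoli (controlled-controlled-NOT) gate, which is reversible and therefore realizable as a unitary operation. The sketch below models only its action on classical basis states and shows that it reproduces AND and NAND. The function names are hypothetical, and the choice of the Toffoli gate as the example is ours rather than the article's.

```python
from itertools import product


def toffoli(a, b, c):
    """Flip the target bit c only when both control bits a and b are 1."""
    return a, b, c ^ (a & b)


def quantum_and(a, b):
    """AND: start the target at 0 and read it after the gate."""
    return toffoli(a, b, 0)[2]


def quantum_nand(a, b):
    """NAND: start the target at 1 and read it after the gate."""
    return toffoli(a, b, 1)[2]


# The gate maps the 8 basis states onto 8 distinct basis states, so it is
# a permutation, which is what makes it a valid (unitary) quantum operation.
images = {toffoli(*bits) for bits in product((0, 1), repeat=3)}
print(len(images) == 8)

for a, b in product((0, 1), repeat=2):
    print(a, b, quantum_and(a, b), quantum_nand(a, b))
```

Because NAND alone suffices to build any classical Boolean circuit, reproducing it with a reversible gate is enough to carry over classical computation in principle.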

Conversely, the behavior of a quantum computer can be emulated using classical hardware — for example, using graphics processing units (GPUs) — but such emulation is feasible only for systems with a small number of qubits, due to the exponential growth in required computational resources.
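The exponential growth can be made concrete with a back-of-the-envelope estimate. The sketch below assumes a straightforward full state-vector emulation that stores one complex amplitude per basis state at 16 bytes each (two 64-bit floats). Other emulation techniques have different costs, and the function name is hypothetical.

```python
def state_vector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory needed to hold all 2**n_qubits complex amplitudes."""
    return (2 ** n_qubits) * bytes_per_amplitude


for n in (10, 30, 50):
    gib = state_vector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {gib:,.6f} GiB")
```

Under these assumptions, 30 qubits already require 16 GiB of memory, and 50 qubits require 16 PiB. Every additional qubit doubles the requirement.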

In certain cases, the use of quantum algorithms can yield dramatic gains in computational efficiency. The most critical application of quantum computing from an information security perspective is Shor's algorithm for integer factorization and discrete logarithm computation, which would enable an adversary to efficiently break the majority of public-key cryptosystems currently in use.
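To indicate where the quantum speed-up enters, the sketch below reproduces only the classical reduction used in Shor's factoring approach: factoring N reduces to finding the multiplicative order r of a number a modulo N. Here the order is found by brute force, which takes exponential time in the bit length of N. That is precisely the step a quantum computer would replace with an efficient period-finding routine. The function names are hypothetical, and the code is a toy for small numbers only.

```python
from math import gcd


def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by classical brute force."""
    if gcd(a, n) != 1:
        raise ValueError("a and n must be coprime")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a, n):
    """Try to split n using the order of a modulo n.

    Returns a pair of non-trivial factors, or None if this choice of a
    does not work and another one has to be tried.
    """
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # an odd order gives no square root of 1
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # the trivial square root -1 reveals nothing
    return gcd(half - 1, n), gcd(half + 1, n)


print(find_order(7, 15))         # order of 7 modulo 15
print(factor_from_order(7, 15))  # factors recovered from that order
```

For N = 15 and a = 7 the order is 4, and since 7² mod 15 = 4, the factors follow as gcd(3, 15) = 3 and gcd(5, 15) = 5.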

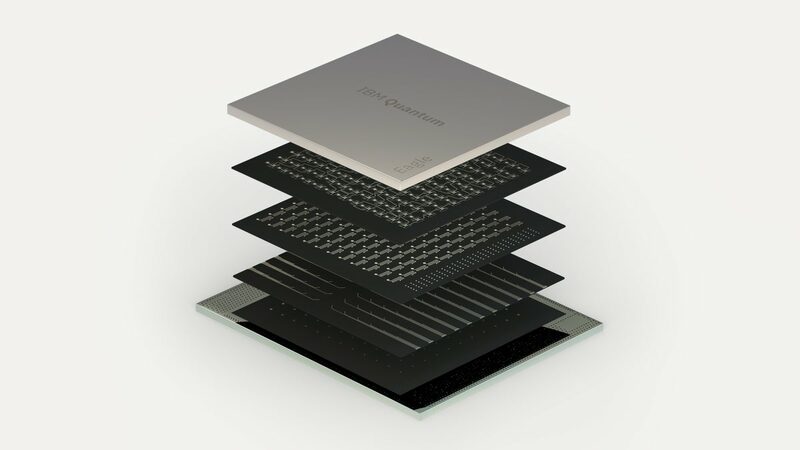

Building a "full-scale" quantum computer capable of solving real-world problems remains a fundamental scientific and engineering challenge. As of 2022, working research prototypes with around 100 entangled qubits existed: for example, the IBM Eagle quantum processor, introduced in 2021, features 127 qubits. Larger systems have emerged since then, including IBM Condor with 1,121 qubits (2023) and D-Wave Advantage 2 with 1,200 qubits (2024). For a number of computationally hard problems, "quantum supremacy" has already been demonstrated, meaning that a quantum system has solved a problem previously considered intractable for classical computers.

Quantum computing is actively advancing in the Russian Federation as well. On July 30, 2020, the Presidium of the Government Commission on Digital Development approved the "Quantum Computing" Roadmap for the development of this high-technology domain, developed with the participation of specialists from the Russian Quantum Center. The Roadmap focuses on addressing research and engineering challenges in quantum computing — including the development of quantum processors based on various hardware architectures — and on building the corresponding scientific and technological ecosystem.

IBM Eagle quantum processor © IBM, 2021

Current quantum computing hardware typically relies on one of the following underlying technologies:

- solid-state quantum dots based on semiconductor materials;

- superconducting circuit elements;

- ions in Paul traps (or atoms in optical traps);

- hybrid approaches combining multiple technologies;

- photonic (optical) technologies.

A separate category consists of special-purpose quantum computing devices, such as quantum simulators designed to address specific problems in modern chemistry, and D-Wave's multi-qubit systems, which are effective only for certain optimization problems and were previously considered unsuitable for practical cryptanalysis. However, subsequent research has shown that such systems can be employed in hybrid factorization algorithms. Research in this area is ongoing, including work by specialists at QApp and the Russian Quantum Center.

The primary challenges in building quantum computers are:

- inherent instability of quantum systems;

- susceptibility to environmental interference;

- accumulation of errors during computation;

- difficulty in reliably initializing qubit states;

- challenges in scaling to large numbers of qubits.

Given the overall trajectory of technological development and the year-on-year growth in interest and investment from businesses, industry, and governments in quantum computing, QApp experts consider it highly likely that a quantum computer capable of practical cryptanalysis will emerge within the 2028–2030 timeframe.

How to effectively counter the quantum threat today?

To effectively counter the quantum threat to your business, the right approach is to adopt solutions based on post-quantum cryptography. QApp has the expertise and proprietary developments needed to protect your valuable data against attacks carried out using a quantum computer.

To minimize the business risks associated with advances in quantum computing, you can:

- Learn more about the quantum threat and the most relevant protection strategies for your specific industry;

- Conduct an audit of your organization's current cybersecurity infrastructure, identify the most suitable quantum-threat mitigation solutions, and develop a comprehensive protection strategy;

- Conduct a pilot deployment of quantum-resistant solutions and scale up the implementation.